Selection bias

As we’ve seen already, in the real world, only a subset of potential outcomes is observed, while the rest are not. This is the fundamental problem of causal inference. We see only observed outcomes and we can never know their counterfactuals.

We turn now to another causal question: Is a newly-developed surgery for cancer effective?

Our treatment is obvious: it’s the surgery. Those who receive it are in the treatment group and those who don’t are in the control group. Suppose you and a friend collect data on 200 cancer patients, all of whom have been informed about this new surgery but only some of whom chose to receive it. You know from the data that 100 patients chose to undergo the surgery and the other 100 did not. We’ll measure the effectiveness of the surgery by how many years patients live after choosing or refusing the treatment.

Your friend does the math in an Excel file and compares the average observed years alive of those who chose the surgery to those who didn’t. He takes the difference and finds that those who had the surgery, on average, lived 2 years less than those who didn’t. He tells you that the number he found, -2 years, is the causal effect of this new surgery on life expectancy among cancer patients.

Mathematically, your friend calculates his causal effect like this:

$$\text{Naive effect} = E[Y \mid \text{Surgery} = 1] - E[Y \mid \text{Surgery} = 0]$$

where $Y$ is the observed number of years a patient lived. This translates into the difference between the average observed outcomes of those who actually ended up receiving the surgery and those who actually didn’t.

The vertical line in the equation, |, is the notation for “conditional on…”; you could also read it as “given that…”.

You don’t buy your friend’s analysis. You know by now that the treatment effect is calculated as the difference in potential outcomes, not the difference in observed outcomes. The two are not the same. You tell him there’s something wrong: you can’t simply compare observed outcomes and assert that the difference is the causal effect unless you’re the master of the multiverse, which your friend most certainly is not.

By simply comparing the observed outcomes, we’re not comparing 🍎 to 🍎. For instance, it might be the case that those who elect to have the surgery tend to be high-risk patients, and high-risk patients are likely to live fewer years whether they have the surgery or not.

In short, your friend’s causal effect is wrong because patients choose whether to undergo the surgery, and different types of patients may be more or less likely to choose it. This is called selection bias. Selection bias arises when subjects choose their own treatment, so the assignment of subjects to treatment is not random.
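The high-risk story above can be sketched as a small Python simulation. Everything in it is an assumption made for illustration: the share of high-risk patients, the baseline years lived, the +1 year benefit of surgery, the probabilities of choosing surgery, and the function names. Because the data are simulated, both potential outcomes are visible for every patient, which is never the case with real data.

```python
import random


def simulate_patients(n=200, seed=0):
    """Simulate hypothetical patients for whom both potential outcomes are known."""
    rng = random.Random(seed)
    patients = []
    for _ in range(n):
        high_risk = rng.random() < 0.5
        # Years lived without surgery: high-risk patients live fewer years.
        y0 = (3.0 if high_risk else 8.0) + rng.gauss(0, 1)
        # Assumed true effect: surgery adds one year for every patient.
        y1 = y0 + 1.0
        # Self-selection: high-risk patients choose surgery far more often.
        surgery = rng.random() < (0.8 if high_risk else 0.2)
        patients.append({"y0": y0, "y1": y1, "surgery": surgery})
    return patients


def mean(values):
    return sum(values) / len(values)


def naive_difference(patients):
    """Difference in average observed outcomes: surgery group minus no-surgery group."""
    # The observed outcome is y1 for those who had surgery and y0 for the rest.
    treated = [p["y1"] for p in patients if p["surgery"]]
    control = [p["y0"] for p in patients if not p["surgery"]]
    return mean(treated) - mean(control)


patients = simulate_patients()
print("True effect for every patient: +1.0 year")
print(f"Naive difference in observed means: {naive_difference(patients):.2f}")
```

Under these assumptions the naive difference comes out negative even though the surgery helps every simulated patient, because the surgery group is dominated by high-risk patients.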

Naive and true causal effects

To see what selection bias is, let’s compare what your friend is calling a causal effect to the correct definition of a causal effect, which you know from the potential outcomes model (aka the Rubin causal model, RCM).

No disrespect to your friend, but let’s call his idea of a causal effect the naive causal effect. Holland, the statistician we previously talked about, is more polite and calls your friend’s causal effect the prima facie causal effect, which pretty much just means naive.

The naive or prima facie causal effect reflects only an association, not an actual causal effect. The naive causal effect and the true causal effect are related, but they are by no means the same. Let’s take a look at why.

Remember, we defined the causal effect (or more specifically the average treatment effect, ATE) as the difference between the average potential outcomes with and without the treatment:

$$\text{ATE} = E[Y^1] - E[Y^0]$$

where $Y^1$ is the potential outcome if a subject receives the surgery and $Y^0$ is the potential outcome if that subject does not, each averaged over all subjects. To show how the naive causal effect is related to the true causal effect, we’ll have to go through some simple algebraic manipulations.

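Remember from above:

$$\text{Naive effect} = E[Y \mid \text{Surgery} = 1] - E[Y \mid \text{Surgery} = 0]$$

We also know…

- For a person who actually underwent the surgery, $Y^1$ (the potential outcome with surgery) is their observed outcome.

- For a person who actually did not undergo the surgery, $Y^0$ (the potential outcome without surgery) is their observed outcome.

This means we can rewrite the naive causal effect as:

$$\text{Naive effect} = E[Y^1 \mid \text{Surgery} = 1] - E[Y^0 \mid \text{Surgery} = 0]$$

Let’s add and subtract the term $E[Y^0 \mid \text{Surgery} = 1]$, the average no-surgery outcome of those who chose the surgery. This is a pure math trick and has nothing to do with causal inference. We can do this because the two added terms cancel each other out:

$$\text{Naive effect} = E[Y^1 \mid \text{Surgery} = 1] - E[Y^0 \mid \text{Surgery} = 0] + E[Y^0 \mid \text{Surgery} = 1] - E[Y^0 \mid \text{Surgery} = 1]$$

And let’s rearrange everything:

$$\text{Naive effect} = E[Y^1 \mid \text{Surgery} = 1] - E[Y^0 \mid \text{Surgery} = 1] + E[Y^0 \mid \text{Surgery} = 1] - E[Y^0 \mid \text{Surgery} = 0]$$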

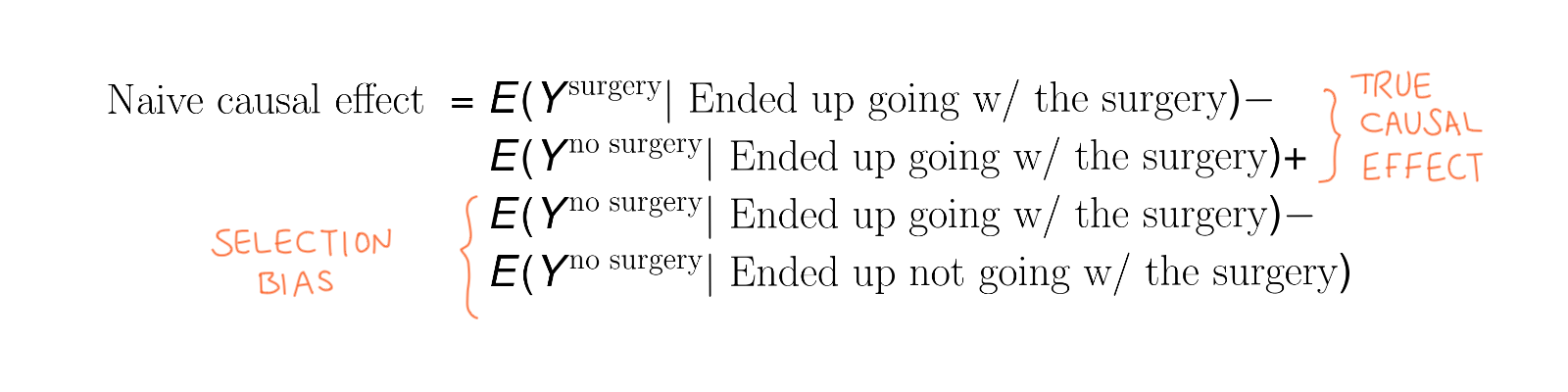

What’s the point of all this? The first two terms are

$$E[Y^1 \mid \text{Surgery} = 1] - E[Y^0 \mid \text{Surgery} = 1]$$

These two terms capture a true causal treatment effect (not a naive one) because they are a difference between potential outcomes for the same group of patients. This is the kind of causal effect we had in mind.

However, there’s a minor catch! The potential outcomes in the first two terms are conditional on ending up going with the surgery (remember the symbol “|”, which we talked about above). Together, the first two terms capture the causal effect only for the treatment group. We call this the average treatment effect on the treated (ATT), as opposed to the ATE, which is the average treatment effect across everyone in the sample. Both the ATE and the ATT are “true” causal effects; the ATT is simply the causal effect for the treated only.

Now, let’s go over the last two terms. The last two terms are:

$$E[Y^0 \mid \text{Surgery} = 1] - E[Y^0 \mid \text{Surgery} = 0]$$

This term is the selection bias we discussed above.

Selection bias

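Let’s recap:

$$\underbrace{E[Y \mid \text{Surgery} = 1] - E[Y \mid \text{Surgery} = 0]}_{\text{Naive effect}} = \underbrace{E[Y^1 \mid \text{Surgery} = 1] - E[Y^0 \mid \text{Surgery} = 1]}_{\text{ATT}} + \underbrace{E[Y^0 \mid \text{Surgery} = 1] - E[Y^0 \mid \text{Surgery} = 0]}_{\text{Selection bias}}$$

Or simply,

$$\text{Naive effect} = \text{ATT} + \text{Selection bias}$$

As you can see, your friend’s causal effect (the naive causal effect), which he calculated as the difference between the average observed outcomes of the treated and the non-treated, is not the same as your causal effect. Your causal effect, the ATT, is the difference in average potential outcomes among the treated. The gap between the two is the selection bias.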
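The identity can be checked numerically on a toy dataset. The sketch below is a minimal illustration, assuming four made-up patients for whom both potential outcomes are known (something that is only possible with invented data); the `decompose` function and the record layout are hypothetical names chosen for this example.

```python
def mean(values):
    return sum(values) / len(values)


def decompose(records):
    """Split the naive difference into the ATT and the selection bias.

    Each record is (had_surgery, y0, y1), where y0 and y1 are the years
    lived without and with surgery.
    """
    y1_treated = mean([y1 for d, y0, y1 in records if d])
    y0_treated = mean([y0 for d, y0, y1 in records if d])
    y0_control = mean([y0 for d, y0, y1 in records if not d])

    naive = y1_treated - y0_control           # difference in observed outcomes
    att = y1_treated - y0_treated             # effect of surgery on the treated
    selection_bias = y0_treated - y0_control  # gap in no-surgery outcomes
    return naive, att, selection_bias


# Hypothetical patients: (had_surgery, years_without_surgery, years_with_surgery)
records = [
    (True, 3.0, 4.0),
    (True, 5.0, 6.0),
    (False, 6.0, 7.0),
    (False, 8.0, 9.0),
]

naive, att, bias = decompose(records)
print(f"naive = {naive}, ATT = {att}, selection bias = {bias}")
```

With these invented numbers the surgery adds one year for each treated patient, yet the naive comparison is negative because the patients who chose surgery would have lived fewer years without it.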

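Thinking outside of our surgical example, we can relate the naive causal effect, the ATT, and the selection bias as follows. Write $D = 1$ for subjects who received the treatment and $D = 0$ for those who did not:

$$E[Y \mid D = 1] - E[Y \mid D = 0] = \text{ATT} + \text{Selection bias}$$

where ATT is

$$E[Y^1 \mid D = 1] - E[Y^0 \mid D = 1]$$

and the selection bias is

$$E[Y^0 \mid D = 1] - E[Y^0 \mid D = 0]$$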

In general, selection bias captures what the difference in outcomes between the treatment group and the control group would have been had neither group received the treatment. In our example, it is the difference in “no-surgery” potential outcomes between those who ended up going with the surgery and those who did not.

If the term is positive, those in the treatment group would have had higher outcomes than those in the control group even if neither group had received the treatment. In general, if the term is non-zero, the two groups differ in ways unrelated to the treatment itself, and the naive causal effect does not equal the ATT.
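To make the sign concrete, here is a worked example with made-up numbers (they are assumptions for illustration, not data from the surgery example). Write $D = 1$ for the treatment group, $D = 0$ for the control group, and $Y^0$, $Y^1$ for the potential outcomes without and with the treatment. Suppose the treated would average 9 without the treatment, the controls average 7, and the treated average 10 with the treatment:

$$
\begin{aligned}
\text{Selection bias} &= E[Y^0 \mid D = 1] - E[Y^0 \mid D = 0] = 9 - 7 = 2 \\
\text{ATT} &= E[Y^1 \mid D = 1] - E[Y^0 \mid D = 1] = 10 - 9 = 1 \\
\text{Naive effect} &= E[Y^1 \mid D = 1] - E[Y^0 \mid D = 0] = 10 - 7 = 3 = \text{ATT} + \text{Selection bias}
\end{aligned}
$$

Here the positive selection bias makes the naive comparison overstate the effect on the treated (3 instead of 1). A negative term would push the naive comparison below the ATT instead.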