All Courses

COMING SOON

COMING SOON

COMING SOON

Potential outcomes

Understanding causal relationships boils down to understanding and “seeing” potential outcomes. To understand what this means, let’s explore an example.

Assume we are interested in the causal effect of a training program on an outcome such as how much a person earns after the treatment. To be more specific, let’s say the program is a training program that teaches digital skills to high school dropouts.

As a researcher who cares about policy, you want to know whether enrolling in the program (receiving the treatment) leads to higher future earnings for participants. Note that this is a forward causal question because we are estimating the effect of a specific cause. Also note that the cause here is not an attribute or state but rather an event. The graph below summarizes the causal relationship in which we’re interested.

There are two potential outcomes for each subject in this example. The outcome that would occur if the subject enrolled in the program, and the outcome that would occur if they did not enroll in the program.

Notation

A bit of notation before we go further.

There are only two treatment options in this case, so the treatment is binary:

- A person enrolls in the program (i.e. receives the treatment)

- A person does not enroll in the program (i.e. does not receive the treatment).

Remember binary just means consisting of only two things (heads or tails, 0 or 1, up or down, left or right). In this case, you either get the treatment or you don’t.

We can write this mathematically using the notation for treatment and to represent the -th individual in the study. We’ll say when person receives the treatment and when person does not receive the treatment.

- denotes that individual receives the treatment

- denotes that individual did not receive the treatment

Just as we can denote the two treatment options, we can also use notation to indicate the two potential outcomes. We’ll use for outcomes.

- is the potential outcome for person under treatment

- is the potential outcome for person under no treatment

Ok. Ready to move on…

The road not taken and the multiverse

How would you go about measuring the impact of the digital skills program? In other words, how should you measure the causal effect of enrolling in the program (the treatment) on the future earnings of participants (the outcome)? 🤔

Your first inclination might be to compare the outcomes of individuals who enrolled in the program with the outcomes of those who did not. Nice try, but not so fast.

Here is where potential outcomes come into play.

Imagine Aliyah and Connor are two individuals in your study. Aliyah received the treatment and Connor did not ( and ). You find that Aliyah makes 32,000 dollars per year five years after enrolling in the program and Connor only makes 25,000 dollars a year ( =32,000 and = 25,000).

Is it fair to say that the causal effect of the digital skills program is 7,000 dollars a year (Aliyah’s income minus Connor’s; - )? No, because there are likely many other factors that help explain the difference between Aliyah and Connor’s incomes. As we’ll see soon, these factors get in the way of isolating the causal effect of the treatment (enrollment in the program) on the outcome.

Rather than comparing actual outcomes across participants in the study (Aliyah versus Connor), ideally, you would like to be able to compare the potential outcomes for each individual in the study (Aliyah’s outcome had she enrolled in the study versus Aliyah’s outcome had she not enrolled in the study - , and the same for Connor - ). The trouble with potential outcomes is that once you observe an outcome for a subject you cannot observe any of their other potential outcomes. Once Aliyah decides to enroll in the program, she has made a specific decision; she has wandered down a specific road. You cannot bring her back and observe what would happen if she made her decision differently. This presents a major roadblock in causal inference.

To clarify this point, imagine we live in a multiverse where the choices people make can play out differently in alternate realities 🤯

Since we have imagined that much, let’s also imagine that we are super-causal-inferenitalists endowed with the ability to travel between these parallel worlds to observe what happens in each.

For this study, we would travel between the following two worlds:

- World 0, the world where Aliyah chooses not to enroll in the program

- And World 1, the world where Aliyah does enroll in the program

Everything about World 0 and World 1 is identical except for the single fact that Aliyah has chosen to enroll in the program in one world but not in the other. The magic of all of this, aside from our ability to travel between worlds and aside from the fact that all potential outcomes have now become observable realities, is that all confounders have disappeared 🧙🏻♀️

In comparing World 0 and World 1, there is nothing to explain a difference in outcomes except for the very cause we are concerned with, enrollment in the program. Therefore, a simple comparison between Aliyah’s outcomes in World 1 and World 0 will give us the exact causal effect of the treatment (aka the treatment effect). The same goes for if we were to travel between two parallel worlds for Connor.

This parallel universe framework is imaginary. But it helps us understand causal inference better. This framework in which we estimate causal effects through estimating potential outcomes is called the Neyman–Rubin causal model but mainly known as the Rubin Causal Model (RCM) or simply the potential outcomes model. Therefore, in the RCM, we have two vectors of potential outcomes: one under treatment and one under no treatment.

Average treatment effect

As we just saw in the potential outcome model, we should think of two parallel worlds. In world 0 (the world under no treatment), subjects don’t get the treatment. In the alternative world, World 1, those same subjects get the treatment.

The “perfect” researcher observes both of these worlds and can calculate the difference between worlds for each subject. This is the treatment effect for each subject. Treatment effects may vary from subject to subject (the treatment effect for Connor may be different from the treatment effect for Aliyah).

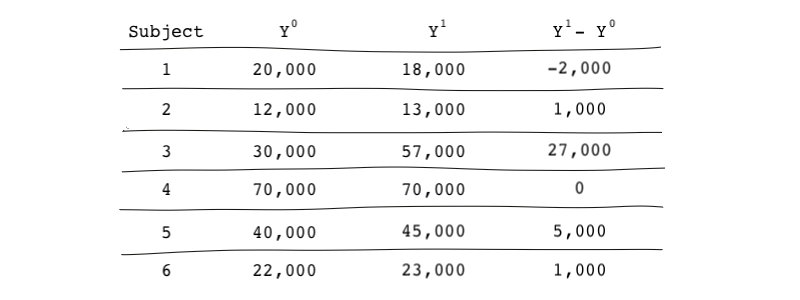

Going back to our digital skills program, imagine we observed potential outcomes for each individual in our study as shown in the table below. Again, is the vector of potential outcomes for all subjects under no treatment (World 0) and is the vector of potential outcomes under treatment (World 1). The vector shows the treatment effect for each subject.

This perfect researcher can then easily calculate what is called the average treatment effect or ATE by calculating the mean of the difference in outcomes under world 1 and world 0. ATE is a form of a causal effect. Therefore, the average treatment effect is the average of all individual treatment effects averaged over all of the subjects in the sample. Mathematically:

Or

is the notation for expected value (or mean in this case).

ATE is comparing what would happen if the subjects in our population were treated versus if the same people were not treated. reflects the average treatment effect of the training program because we’re comparing everybody to themselves; we’re comparing 🍎 to 🍎

This means the effect of participating in the program on future earnings is, on average, 5,333 dollars. The code below shows how we calculate ATE:

y0 <- c(20000, 12000, 30000, 70000, 40000, 22000) y1 <- c(18000, 13000, 57000, 70000, 45000, 23000) # Calculating the average treatment effect ate <- mean(y1) - mean(y0)

import numpy as np y0 = [20000, 12000, 30000, 70000, 40000, 22000] y1 = [18000, 13000, 57000, 70000, 45000, 23000] # Calculating the average treatment effect np.mean(y1) - np.mean(y0) #ate

input y0 y1 20000 18000 12000 13000 30000 57000 70000 70000 40000 45000 22000 23000 end * Calculating the average treatment effect egen avg_y1 = mean(y1) egen avg_y0 = mean(y0) gen avg = avg_y1 - avg_y0 display avg